
Best Practices for Transparent AI Interfaces

Transparent AI interfaces are essential: label AI outputs, explain decisions plainly, give users control, document data, and mitigate bias.

Transparency in AI is no longer optional - it’s a necessity. Clear, understandable AI interfaces build trust, empower users, and meet legal standards. But how can you design systems that are both transparent and user-friendly? Here’s what you need to know:

Explain AI Decisions Simply: Use plain language and tools to provide actionable, context-specific explanations. Avoid overwhelming users with technical jargon.

Make AI Presence Obvious: Clearly label AI-generated content and highlight system limitations or uncertainties. Visual cues like "Generated by AI" can help.

Offer User Control: Let users influence decisions with features like confidence scores, manual overrides, and feedback options.

Document AI Systems: Share details about training data, testing procedures, and limitations to ensure accountability and compliance.

Address Bias: Disclose potential risks, involve diverse perspectives, and use standardized frameworks like Model Cards or the NIST AI RMF.

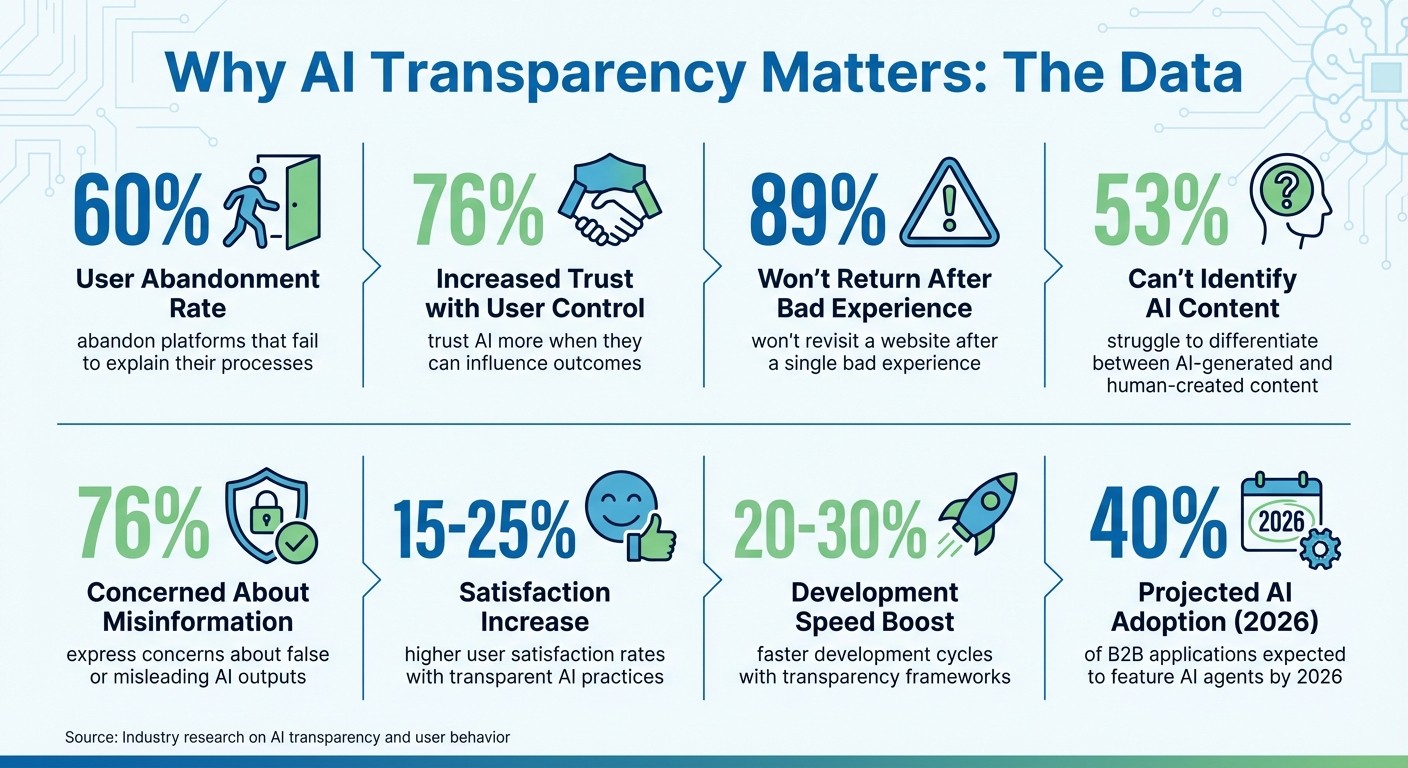

Why it matters: Research shows that 60% of users abandon platforms that fail to explain their processes, and 76% trust AI more when they can influence outcomes. With regulations tightening, transparency isn’t just good practice - it’s a competitive edge.

Key Statistics on AI Transparency and User Trust

Humanizing AI: Empowering users through transparent design by Brittney Muto

Best Practices for Designing Transparent AI Interfaces

Creating transparent AI interfaces means ensuring users can clearly see when AI is involved, understand how decisions are made, and retain control over outcomes - all while adhering to ethical principles.

Clearly Indicate AI Presence

It's crucial for users to recognize when AI is at work. A "seamful" design approach openly reveals AI's involvement and its boundaries, including uncertainties or limitations. This transparency builds trust by not masking the system's imperfections.

Visual cues like size, color, and contrast can emphasize AI-generated elements. For instance, a simple label such as "Generated by AI" can raise awareness. More advanced designs might visually represent confidence levels or flag when the system is nearing its operational limits.

Why is this so important? Research indicates that 60% of users will abandon a platform if it fails to keep them informed about its processes. The goal isn't to overwhelm users with information but to provide clarity at critical moments where AI's role directly impacts decisions.

Identifying AI elements clearly lays the groundwork for offering accessible and meaningful explanations.

Use Plain Language and Explainability Tools

Skip the technical jargon. Instead, use language that resonates with users' everyday experiences. For example, instead of saying, "The model optimized for feature vectors X and Y", explain it in relatable terms: "We considered your payment history and current income."

What really matters is actionable explanations - guidance users can act on. For instance, telling someone their loan application was denied due to "age" offers no solution. However, pointing to their "credit score" gives them a clear path for improvement. This concept, often referred to as recourse, shifts transparency from passive information-sharing to active problem-solving.
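As a rough illustration of the two ideas above (plain wording plus recourse), here is a minimal Python sketch. The feature names, the `FactorCopy` type, and the guidance strings are all hypothetical placeholders, not part of any real lending system. It maps internal factor names to user-facing copy and lists the factors a user can act on first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FactorCopy:
    label: str            # user-facing name for the factor
    actionable: bool      # can the user realistically change it?
    next_step: str = ""   # placeholder guidance shown for actionable factors


# Hypothetical mapping from internal feature names to user-facing copy.
FACTOR_COPY = {
    "credit_score": FactorCopy("your credit score", True,
                               "Improving it may change the outcome."),
    "payment_history": FactorCopy("your payment history", True,
                                  "On-time payments can strengthen it."),
    "age": FactorCopy("your age", False),
}


def explain(ranked_factors: list[str], max_items: int = 2) -> list[str]:
    """Return plain-language lines, listing actionable factors first."""
    known = [FACTOR_COPY[name] for name in ranked_factors if name in FACTOR_COPY]
    # sorted() is stable, so the model's ranking is kept within each group.
    ordered = sorted(known, key=lambda copy: not copy.actionable)
    return [f"We considered {c.label}. {c.next_step}".strip()
            for c in ordered[:max_items]]
```

Calling `explain(["age", "credit_score", "payment_history"])` would surface the credit score and payment history lines ahead of age. Whether non-actionable factors should still be disclosed elsewhere is a product and compliance decision this sketch does not settle.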

Tailor your explanations to the context. For individual decisions, provide specific insights. For broader understanding, offer system overviews or counterfactual examples that illustrate alternative outcomes.
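To make the idea of a counterfactual example concrete, the sketch below uses a toy decision rule. The `approve` function and its thresholds are invented stand-ins for a real model. It searches for the smallest credit score that would have flipped a denial and phrases the result for the user.

```python
from typing import Optional


def approve(credit_score: int, monthly_income: float) -> bool:
    # Toy stand-in for a real model; thresholds are illustrative assumptions.
    return credit_score >= 680 and monthly_income >= 3000


def counterfactual_credit_score(credit_score: int, monthly_income: float,
                                step: int = 10, ceiling: int = 850) -> Optional[int]:
    """Smallest score (in `step` increments) that flips a denial, else None."""
    if approve(credit_score, monthly_income):
        return None  # already approved, nothing to explain
    candidate = credit_score
    while candidate <= ceiling:
        if approve(candidate, monthly_income):
            return candidate
        candidate += step
    return None  # no score alone would change the outcome


def counterfactual_message(credit_score: int, monthly_income: float) -> Optional[str]:
    target = counterfactual_credit_score(credit_score, monthly_income)
    if target is None:
        return None
    return (f"If your credit score were {target} instead of {credit_score}, "
            "this application would have been approved.")
```

When no single change would alter the result (for example, income is also below the toy threshold), the function returns `None`, and the interface would need a different explanation rather than a misleading one.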

Tools like SHAP and LIME estimate how much each input contributed to a specific prediction, and those estimates can be turned into simple visualizations. However, keep it simple - bombarding users with technical details might lead to "algorithm aversion".

"Explainable AI (XAI) is a set of processes and methods that allows human users to comprehend and trust the results and output created by machine learning algorithms." - IBM

Enable User Control and Feedback

Transparency isn't just about understanding - it's also about giving users control. Studies show that 76% of users are more likely to trust an AI system when they can influence or adjust its outcomes. This means moving beyond explanations to offer actionable ways for users to intervene.

Confidence scores guide users on when to rely on the AI and when to trust their own judgment. For example, if the system shows low certainty, users know to approach the recommendation with caution. Similarly, manual overrides ensure humans retain authority over critical decisions.
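One way this might look in practice is sketched below. The thresholds (0.6 and 0.85), the `ConfidenceDisplay` type, and the wording are assumptions chosen for illustration, and the sketch presumes the model's score is reasonably calibrated, which is not a given. It turns a raw confidence value into a badge, a line of guidance, and a flag for suggesting manual review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfidenceDisplay:
    badge: str            # short label shown next to the AI output
    guidance: str         # one line on how much to rely on the output
    suggest_review: bool  # whether the UI should prompt a manual check


def display_for(confidence: float, low: float = 0.6,
                high: float = 0.85) -> ConfidenceDisplay:
    """Map a 0-1 confidence score to user-facing copy (thresholds assumed)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= high:
        return ConfidenceDisplay(
            "High confidence",
            "You can still edit or reject this suggestion.", False)
    if confidence >= low:
        return ConfidenceDisplay(
            "Medium confidence",
            "Check the key details before accepting.", False)
    return ConfidenceDisplay(
        "Low confidence",
        "Treat this as a starting point and review it manually.", True)
```

Note that the override stays available at every level; the score only changes how strongly the interface nudges the user toward their own judgment.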

Interactive features, such as "Why am I seeing this?" tooltips, let users explore the reasoning behind recommendations. Systems like "TalkTuner" go a step further, allowing users to view and edit assumptions made by the AI, such as age or education.

In line with seamful design principles, it's helpful to expose mismatches - like when the inputs the system receives differ from the data it was trained on - so users can step in and correct course. For example, when the system operates outside its training data or encounters uncertainty, it should proactively suggest manual review instead of pretending everything is running smoothly.
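A simple way to approximate "operating outside the training data" is a range check, sketched below. This is a crude proxy under stated assumptions: the field names and ranges are hypothetical, and real out-of-distribution detection is usually more involved. It still shows how a mismatch can become a visible prompt for manual review.

```python
from typing import Optional


def out_of_range_fields(inputs: dict[str, float],
                        training_ranges: dict[str, tuple[float, float]]) -> list[str]:
    """Return input names whose values fall outside the ranges seen in training."""
    flagged = []
    for name, value in inputs.items():
        bounds = training_ranges.get(name)
        if bounds is None:
            flagged.append(name)  # field never seen in training: treat as unknown
            continue
        lowest, highest = bounds
        if not lowest <= value <= highest:
            flagged.append(name)
    return flagged


def review_notice(flagged: list[str]) -> Optional[str]:
    """Build a plain-language notice, or None when nothing was flagged."""
    if not flagged:
        return None
    return ("This result relies on values outside what the system was trained on ("
            + ", ".join(flagged) + "). Please review it manually.")
```

For instance, with training ranges of `{"income": (0, 250000), "age": (18, 90)}`, an input of `{"income": 500000, "age": 40}` would flag `income` and produce a review notice instead of a silent recommendation.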

"The purpose of explainability... is not to merely increase users' trust in the system's decisions, but to calibrate the users' level of trust to the correct level." - Wikipedia

Building Transparency into Design Systems

Incorporating transparency into your design system isn’t just a nice-to-have - it’s essential for maintaining user trust at every interaction. Building it into shared components and guidelines, rather than adding it screen by screen, keeps AI interactions consistent as the product scales.

Align with Established Frameworks

Two major frameworks can help guide your efforts toward transparent AI design: CLeAR and the NIST AI Risk Management Framework (AI RMF).

The CLeAR principles - Comparable, Legible, Actionable, and Robust - act as a practical checklist for creating transparent AI components. For instance, standardizing documentation makes it easier to compare models, while using plain language ensures clarity. Documentation should also be actionable, meaning it helps readers make informed decisions, and robust, meaning it is updated regularly (ideally automatically) so it stays accurate.

Meanwhile, the NIST AI RMF, shaped over 18 months with contributions from more than 240 organizations, provides a governance framework structured around four core functions: Govern, Map, Measure, and Manage. This framework helps weave transparency requirements into existing developer workflows, such as CI/CD pipelines, design reviews, and documentation practices. This way, transparency becomes a natural part of the development process.
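As one possible way to fold a transparency requirement into a CI/CD pipeline, the sketch below checks a model's documentation record before release. The field names and the 180-day freshness window are assumptions for illustration; neither NIST nor CLeAR prescribes this exact schema.

```python
from datetime import date

# Hypothetical minimum set of documentation fields a release must carry.
REQUIRED_FIELDS = (
    "intended_use",
    "training_data_sources",
    "known_limitations",
    "evaluation_summary",
    "last_reviewed",
)


def documentation_problems(doc: dict, today: date,
                           max_age_days: int = 180) -> list[str]:
    """List missing or stale documentation; an empty list means the check passes."""
    problems = [f"missing field: {name}"
                for name in REQUIRED_FIELDS if not doc.get(name)]
    reviewed = doc.get("last_reviewed")
    if isinstance(reviewed, date) and (today - reviewed).days > max_age_days:
        problems.append(
            f"documentation last reviewed {reviewed.isoformat()}, "
            f"older than {max_age_days} days")
    return problems
```

A pipeline step could call `documentation_problems(...)` and fail the build when the list is not empty, so missing or outdated documentation blocks a release the same way a failing test would.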

"Documentation should be mandatory in the creation, usage, and sale of datasets, models, and AI systems." – Kasia Chmielinski et al., The CLeAR Documentation Framework

Once these frameworks establish documentation-level transparency, the next step is to visually differentiate AI-driven content from human-generated elements.

Differentiating AI from Non-AI Content

Making it clear when AI is involved is critical to user trust. A well-designed system should ensure that users can instantly recognize AI-generated content. For example, Carbon’s design system uses an "AI label" with light-inspired effects to signal AI involvement and activate explainability features.

Carbon achieves this distinction using AI-specific design tokens, which transform standard components into unique AI variants. The system also includes a revert function: once users edit AI-suggested content, they can switch back to the AI version if needed. This functionality is particularly important, as studies show that 53% of consumers struggle to differentiate between AI-generated and human-created content, and 76% express concerns about the potential for false or misleading AI outputs.

"Transparency of AI presence is key to user trust. Having a consistent identity helps build awareness and anticipation of AI presence across our experiences." – Carbon Design System

To maintain clarity, AI-specific styling should only be used to indicate genuine AI involvement. Using these styles decoratively can dilute their meaning and confuse users.
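The revert behavior and the "label only genuine AI content" rule can be modeled with a small piece of state. The `AiField` class below is a hypothetical sketch, not Carbon's API: it keeps both the AI suggestion and the user's edit, and only reports that the AI label should be shown while the AI version is on screen. The `restore_edit` method is an extra assumed here for convenience.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AiField:
    ai_value: str                     # content suggested by the AI
    user_value: Optional[str] = None  # the user's own edit, if any
    showing_ai: bool = True           # which version is currently displayed

    @property
    def value(self) -> str:
        if self.showing_ai or self.user_value is None:
            return self.ai_value
        return self.user_value

    @property
    def show_ai_label(self) -> bool:
        # The AI styling applies only while AI content is actually displayed.
        return self.showing_ai or self.user_value is None

    def edit(self, text: str) -> None:
        self.user_value = text
        self.showing_ai = False

    def revert_to_ai(self) -> None:
        self.showing_ai = True

    def restore_edit(self) -> None:
        if self.user_value is not None:
            self.showing_ai = False
```

Keeping both versions in state means reverting never destroys the user's work, and the label follows the content rather than the component.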

At Exalt Studio, we apply these transparency principles to design systems, ensuring our AI interfaces are not only functional but also trustworthy and user-focused.

Ethical Disclosures for Transparent AI

Building trust in AI systems hinges on open communication about how they work and where their boundaries lie. Ethical disclosures play a key role in this process, protecting users while holding developers accountable. These practices go hand-in-hand with thoughtful design, strengthening user confidence through clear responsibility.

Disclose Training Data and Limitations

Sharing details about the origins and licensing of training data is essential for compliance and clarity. But transparency shouldn’t stop there - users need to understand how the data was gathered, processed, and labeled. It's also important to explain how representative the data is, particularly in terms of its cultural or regional relevance. For instance, if an AI model was trained primarily on North American English, users outside that context should know this to set realistic expectations for performance in their domain.

The CLeAR framework offers a practical way to structure this documentation. It emphasizes that information should be:

Comparable: Presented in a standardized format.

Legible: Written in straightforward, accessible language.

Actionable: Useful for making informed decisions.

Robust: Regularly updated to stay accurate.

"Transparency can be realized, in part, by providing information about how the data used to develop and evaluate the AI system was collected and processed, how AI models were built, trained, and fine-tuned, and how models and systems were evaluated and deployed." – Kasia Chmielinski, Sarah Newman, et al., The CLeAR Documentation Framework

Documenting the entire lifecycle - from initial data collection to fine-tuning - ensures that critical context isn’t lost. This approach also helps identify risks early, such as potential privacy issues or biased patterns embedded in the data.

Address Bias and Fair Outcomes

AI systems can mirror existing biases or even create new ones, with serious consequences. Documented cases of racial, gender, and disability bias show the potential for harm. It's vital to acknowledge these risks upfront, but without overwhelming users with excessive technical detail.

Strive for functional transparency - explain how the system behaves and its limitations - rather than diving into overly complex internal mechanics. Layered disclosures work well here: offer concise, user-friendly summaries while providing in-depth documentation for developers or auditors.
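Layered disclosure can be as simple as storing one record and rendering it differently per audience. The sketch below is a minimal illustration; the `Disclosure` type, the two audience names, and the sample fields are assumptions rather than a standard format.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Disclosure:
    summary: str                  # one or two plain-language sentences
    limitations: tuple[str, ...]  # behavior-level caveats users should know
    technical_notes: dict[str, str] = field(default_factory=dict)  # for auditors


def render(disclosure: Disclosure, audience: str) -> str:
    """Users see the summary and limitations; auditors also see technical notes."""
    if audience not in ("user", "auditor"):
        raise ValueError(f"unknown audience: {audience}")
    lines = [disclosure.summary]
    lines += [f"Limitation: {item}" for item in disclosure.limitations]
    if audience == "auditor":
        lines += [f"{key}: {value}"
                  for key, value in disclosure.technical_notes.items()]
    return "\n".join(lines)
```

Because both views come from the same record, the short user-facing summary cannot drift away from the detailed documentation that developers and auditors rely on.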

"Transparency is fundamentally about supporting appropriate human understanding, and this understanding is sought by different stakeholders with different goals in different contexts." – Q. Vera Liao and Jennifer Wortman Vaughan

Tools like Model Cards or FactSheets help simplify complex details into clear, comparable formats. These templates allow users to assess whether a system aligns with fairness expectations. Collaborating with diverse communities during development can further minimize bias and ensure fair outcomes.

At Exalt Studio, we embed these ethical principles into our AI designs, ensuring that transparency not only builds user trust but also aligns with compliance standards.

Conclusion

Transparent AI design isn't just a buzzword - it's a cornerstone for building trust and standing out in a crowded market. When users can clearly understand how AI makes decisions, they’re more likely to engage confidently and stick around. And here’s a telling statistic: nearly 89% of users won’t revisit a website after a single bad experience. That makes transparency not just important but essential for retaining users.

The strategies we’ve explored - like using clear indicators of AI presence, offering plain-language explanations, providing user control options, and ensuring ethical disclosures - help turn AI from a confusing "black box" into a trusted ally. Companies that adopt these practices have reported 15–25% higher user satisfaction rates and 20–30% faster development cycles. Transparency also strengthens accountability by making it clear who oversees the system's behavior, ensuring ethical standards are followed at every step of the AI process.

With AI becoming more autonomous and regulations like the EU AI Act tightening the rules, transparency is no longer optional - it’s becoming a competitive edge. By 2026, 40% of B2B applications are expected to feature task-focused AI agents, making compliance and trustworthiness even more critical. These transparency measures don’t just check regulatory boxes - they also enhance user engagement and market positioning. At Exalt Studio, we integrate these principles into every AI interface we design, ensuring your product meets ethical benchmarks and delivers the kind of seamless, trust-building experience users now demand.

FAQs

How does AI transparency build user trust and encourage engagement?

AI transparency plays a key role in building trust and encouraging interaction by making the system's processes easier to understand. When users can see how decisions are made - like which factors influence specific outcomes - they tend to feel more confident in the system and are more willing to engage with it. Offering clear explanations and insights into the AI's reasoning strengthens this trust even further.

Tools like transparent interfaces or dashboards that reveal system logic and user models help users grasp potential biases, adjust their actions, and feel more in control. This not only boosts their confidence but also motivates them to participate and provide feedback. In the end, transparency helps create a stronger bond between users and AI systems, leading to better interactions and results.

What are the best frameworks for designing transparent AI systems?

Designing AI systems that are easy to understand and trustworthy requires using frameworks that focus on clarity, accountability, and user trust. One notable approach is the Algorithmic Transparency Playbook, which offers practical ways to explain AI decisions in a way that makes sense to various stakeholders. It’s all about creating explanations that are both actionable and user-friendly.

Another helpful tool is the CLeAR Documentation Framework. This framework lays out detailed steps for documenting AI systems, ensuring they remain transparent and easy to use. It’s a guide that helps developers and organizations keep their AI solutions open and understandable.

On top of that, many experts highlight the importance of human-centered design principles. These principles focus on creating interactions with AI that are straightforward, honest, and tailored to the user. By doing so, they make it easier for people to grasp how AI works and feel confident in using it.

When combined, these frameworks and principles help organizations create AI systems that are not only ethical but also inspire trust and empower users.

Why is transparency important for reducing AI bias and ensuring fairness?

Transparency plays a crucial role in tackling AI bias and encouraging fair practices. When AI systems clearly outline how decisions are made, it becomes easier for developers and users to spot and address biases hidden in the data, algorithms, or processes. For example, offering clear explanations about the key factors influencing decisions can help highlight and correct unfair patterns.

It also helps build trust and accountability. When stakeholders can evaluate whether an AI system is operating ethically and fairly, confidence in the technology grows. Tools like interactive dashboards or visualizations can make it simpler to identify and fix biased behaviors, paving the way for fairer outcomes. In the end, transparency not only boosts user confidence but also supports more equitable decision-making.