
AI vs. Manual Testing: Interaction Flow Validation

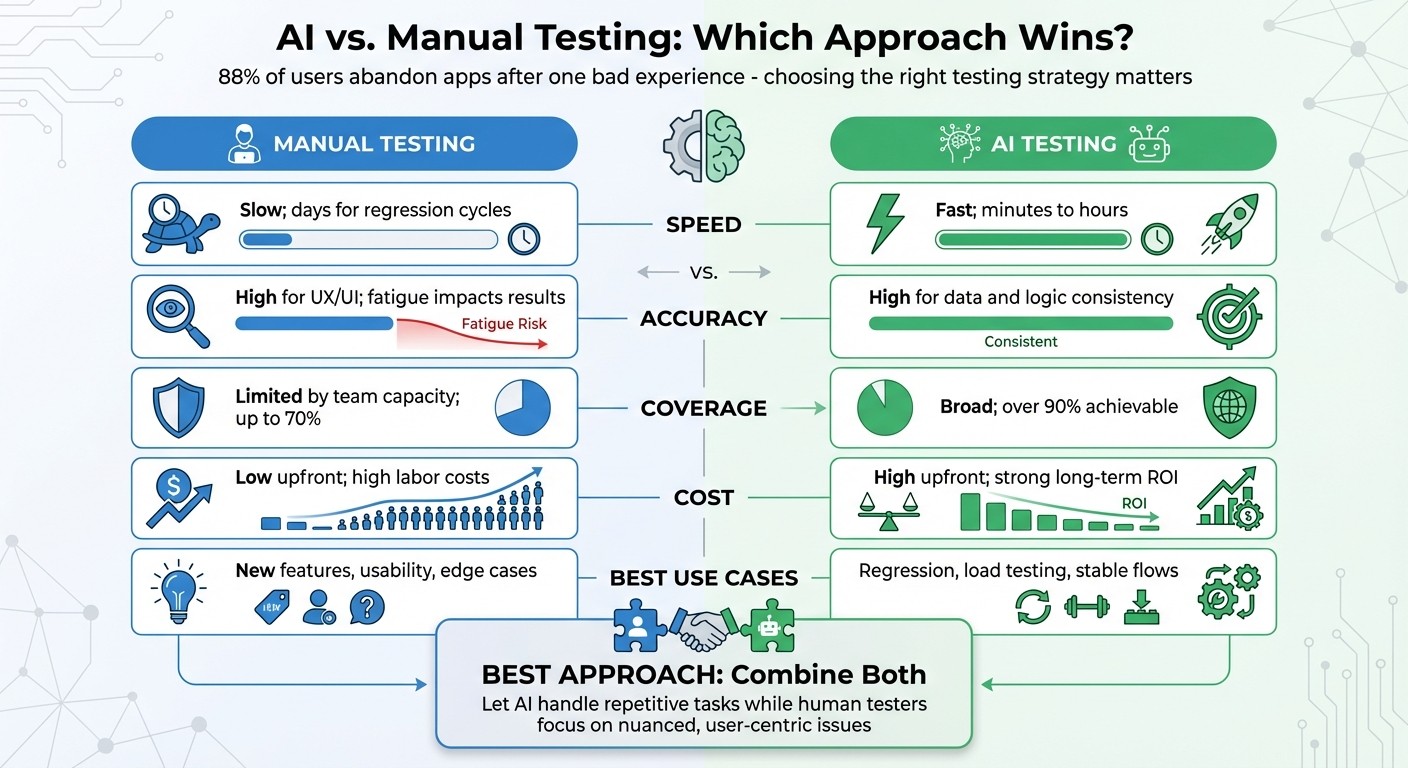

Compare AI and manual testing for interaction flows: speed, accuracy, coverage, cost, and when to use each in a hybrid QA approach.

88% of users abandon apps after one bad experience. A 1-second delay can cut conversions by 7%. Interaction flow validation ensures smooth, functional processes that keep users engaged. But should you rely on manual testing, AI-powered testing, or both? Here’s the breakdown:

Manual Testing: Great for assessing usability, design, and edge cases. Human testers bring intuition and empathy, making it ideal for new features or exploratory tasks. However, it’s slow, labor-intensive, and prone to errors.

AI Testing: Excels in speed, scalability, and repetitive tasks like regression testing. It reduces maintenance time and handles complex workflows efficiently. But it struggles with subjective judgment and ambiguous scenarios.

Quick Comparison

| Aspect | Manual Testing | AI Testing |
|---|---|---|
| Speed | Slow; days for regression cycles | Fast; minutes to hours |
| Accuracy | High for UX/UI; fatigue impacts results | High for data and logic consistency |
| Coverage | Limited by team capacity | Broad; over 90% achievable |
| Cost | Low upfront; high labor costs | High upfront; strong long-term ROI |
| Best Use Cases | New features, usability, edge cases | Regression, load testing, stable flows |

The best approach? Combine both. Let AI handle repetitive tasks while human testers focus on nuanced, user-centric issues. This hybrid strategy improves efficiency and ensures a better user experience.

AI vs Manual Testing: Speed, Accuracy, Coverage and Cost Comparison


Manual Testing for Interaction Flows

Manual testing relies on people navigating an application to uncover issues. Unlike automated scripts that follow strict instructions, human testers bring intuition, empathy, and the ability to think like real users to the process.

For example, when a tester evaluates a checkout flow, they’re not just verifying functionality - they’re also assessing usability. Does the process feel natural? Are error messages clear? Is the interface easy for anyone to understand? These are the kinds of subjective judgments that machines just can’t make, but they’re crucial for ensuring a smooth interaction flow.

"Manual testing is actual humans clicking around your application, trying things out, seeing what breaks."

Deboshree Banerjee, Autify

Manual testing is especially effective in exploratory scenarios, where testers investigate edge cases without predefined scripts. This approach often uncovers unexpected issues that developers might not have anticipated. With around two-thirds of software development companies combining manual and automated testing - often in ratios like 75:25 or 50:50 - it’s clear that manual testing remains a key part of the quality assurance process.

Benefits of Manual Testing

One of the biggest advantages of manual testing is its flexibility. Human testers can instantly adapt to changes in the user interface, exploring new features or modified flows without needing to update scripts. This makes it especially useful for testing brand-new features with evolving requirements, where automating unstable functionality could lead to unnecessary maintenance headaches.

Another major strength is contextual judgment. A tester can identify when a technically "successful" flow still fails from a user’s perspective. They are also great at spotting visual issues - like font mismatches or awkwardly placed elements - that automated tools might overlook. Additionally, manual testing has a low upfront cost, as it doesn’t require special tools or scripting.

Drawbacks of Manual Testing

That said, manual testing comes with some notable challenges, especially in fast-paced environments.

For starters, it’s slow, labor-intensive, and prone to human error. A typical tester can handle about 50 test cases per day, but complex applications often have thousands of test cases. This can create bottlenecks, particularly in Agile or DevOps settings where frequent releases are the norm.
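To make the bottleneck concrete, the arithmetic can be sketched in a few lines of Python. The 50-cases-per-day figure comes from the paragraph above; the suite size of 3,000 cases and the team of four testers are illustrative assumptions, not figures from any study.

```python
import math


def manual_days(total_cases: int, testers: int, cases_per_tester_per_day: int = 50) -> int:
    """Working days needed to run every test case once, rounded up."""
    if testers <= 0 or cases_per_tester_per_day <= 0:
        raise ValueError("testers and daily throughput must be positive")
    return math.ceil(total_cases / (testers * cases_per_tester_per_day))


# Hypothetical suite: 3,000 cases shared by 4 testers.
print(manual_days(3000, 4))  # 3000 / (4 * 50) = 15 working days
```

Under these assumptions a single full pass takes three working weeks, which is longer than many sprint cycles.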

Repetition also takes its toll. Tasks like checking input fields across multiple forms can lead to fatigue, causing testers to miss subtle bugs or skip test cases altogether. Additionally, different testers might interpret requirements differently, leading to inconsistent results and potential confusion within teams.

As applications grow, the regression testing workload can become overwhelming. Each new release requires re-testing existing features, and the manual effort scales directly with the complexity of the application. For example, manually verifying data integrity across thousands of records is not only inefficient but also highly error-prone. With enterprise downtime costing upwards of $300,000 per hour, and production bugs being 100 times more expensive to fix than those caught during design, the stakes for thorough and efficient testing couldn’t be higher.

AI-Powered Testing for Interaction Flows

AI-powered testing is changing the way quality assurance (QA) is done. Instead of relying on manual clicks or rigid scripts, AI agents act like human testers, exploring applications by reading the DOM, identifying actionable elements, and triggering state changes autonomously. These systems leverage technologies like Multimodal Large Language Models (MLLMs), allowing them to understand on-screen content and decide what to test next - no predefined instructions required.

What makes AI testing stand out is its ability to adjust and learn. For instance, if a button's ID changes or a form field shifts, self-healing locators can use visual patterns and historical data to identify and remap the element during execution. This reduces the maintenance workload, which typically eats up 30% of a tester’s time. AI also focuses on high-risk areas by analyzing user behavior patterns and defect histories, ensuring testing efforts are spent where they matter most.
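A rough sketch of how a self-healing locator might work is shown below. It is a simplified illustration, not the algorithm of any particular tool: the element dictionaries, the `fingerprint` structure, the attribute weights, and the 0.6 threshold are all assumptions made for the example, and real tools typically also draw on visual patterns and run history.

```python
from difflib import SequenceMatcher


def similarity(candidate: dict, fingerprint: dict) -> float:
    """Score how closely a candidate element matches the recorded fingerprint (0.0 to 1.0)."""
    score = 0.0
    if candidate.get("tag") == fingerprint.get("tag"):
        score += 0.3  # hypothetical weight for a matching tag name
    text_ratio = SequenceMatcher(
        None, candidate.get("text", ""), fingerprint.get("text", "")
    ).ratio()
    score += 0.5 * text_ratio  # hypothetical weight for visible text
    cand_classes = set(candidate.get("classes", []))
    known_classes = set(fingerprint.get("classes", []))
    if cand_classes | known_classes:
        overlap = len(cand_classes & known_classes) / len(cand_classes | known_classes)
        score += 0.2 * overlap  # hypothetical weight for CSS class overlap
    return score


def find_element(elements: list[dict], fingerprint: dict, min_score: float = 0.6):
    """Try the recorded id first; otherwise fall back to the best-scoring candidate."""
    for element in elements:
        if element.get("id") == fingerprint.get("id"):
            return element
    best = max(elements, key=lambda e: similarity(e, fingerprint), default=None)
    if best is not None and similarity(best, fingerprint) >= min_score:
        return best  # "healed" match; a real tool would log this for review
    return None


# The button's id changed from "submit-btn" to "checkout-submit" between releases.
recorded = {"id": "submit-btn", "tag": "button", "text": "Place order", "classes": ["btn", "primary"]}
page = [
    {"id": "cancel", "tag": "button", "text": "Cancel", "classes": ["btn"]},
    {"id": "checkout-submit", "tag": "button", "text": "Place order", "classes": ["btn", "primary"]},
]
match = find_element(page, recorded)
print(match["id"])  # checkout-submit
```

Returning `None` below the threshold is a deliberate choice in this sketch: a locator that always picks something is how tests end up clicking the wrong element.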

"AI pushes the boundaries of QA beyond what traditional manual and automated testing can achieve... The real value lies in its ability to make informed decisions by anticipating risks."

QA.tech

AI also brings unmatched scalability. It can simulate thousands of user interactions across different devices, languages, and accessibility requirements - all at once. A great example is Bug0, which reported in 2026 that their AI-powered browser testing service helped teams achieve 100% coverage on critical interaction flows in just 7 days. Another study from 2025 showed AI agents using models like Google Gemini 2.5 Pro and GPT-4o to generate 81 test cases for an e-commerce app, all for just $0.026.

Benefits of AI-Powered Testing

The most obvious advantage is speed. AI can execute thousands of test cases in a fraction of the time manual testing would take. This is especially valuable in Agile and DevOps workflows, where rapid releases are the norm. Self-healing mechanisms in AI testing reduce build failures by up to 40%, and AI-generated test cases have a flakiness rate of just 8.3% - well within accepted industry standards.
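Flakiness figures like the one above depend on how "flaky" is defined. One common working definition, assumed here, is that a test is flaky if repeated runs against the same build disagree. The sketch below applies that definition to a made-up run history; the test names and outcomes are purely illustrative.

```python
def flakiness_rate(history: dict[str, list[bool]]) -> float:
    """Share of tests whose repeated runs on the same build produced mixed results."""
    if not history:
        return 0.0
    flaky = sum(1 for outcomes in history.values() if len(set(outcomes)) > 1)
    return flaky / len(history)


# Hypothetical history: three runs per test on one unchanged build.
runs = {
    "login_flow": [True, True, True],
    "checkout_flow": [True, False, True],   # mixed results, so counted as flaky
    "search_flow": [True, True, True],
    "profile_flow": [False, False, False],  # consistently failing, not flaky
}
print(f"{flakiness_rate(runs):.1%}")  # 25.0%
```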

Another key benefit is scalability. Over 70% of QA teams are already using AI tools to improve coverage. AI doesn’t just test faster; it tests smarter, covering complex interaction flows across browsers, screen sizes, and user scenarios - all without requiring more team members. This is a game-changer for teams struggling with limited test coverage, a problem reported by 55.6% of developers.

AI also excels at spotting patterns and anomalies. For example, visual regression testing powered by AI uses techniques like the Structural Similarity Index (SSIM) to detect layout changes while ignoring minor variations like anti-aliasing. One SaaS team reduced escaped UI regressions by 62% after integrating AI into 180 key interaction flows. Beyond that, AI can flag potential bugs before they escalate, saving teams from the higher costs of fixing issues in production.
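For readers unfamiliar with SSIM, the sketch below computes a single global score for two grayscale images given as flat lists of pixel values. This is a deliberate simplification: production implementations compute SSIM over sliding local windows and average the results, and the sample "images" here are invented for illustration. The constants 0.01 and 0.03 are the conventional defaults for the stabilizing terms.

```python
def global_ssim(x: list[float], y: list[float], dynamic_range: float = 255.0) -> float:
    """Single-window SSIM over two equally sized grayscale pixel lists."""
    if len(x) != len(y) or not x:
        raise ValueError("images must be non-empty and the same size")
    n = len(x)
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    c1 = (0.01 * dynamic_range) ** 2  # stabilizes the luminance term
    c2 = (0.03 * dynamic_range) ** 2  # stabilizes the contrast/structure term
    return ((2 * mu_x * mu_y + c1) * (2 * cov + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    )


baseline = [10, 10, 200, 200, 10, 10, 200, 200]
minor_noise = [11, 9, 201, 199, 11, 9, 201, 199]    # anti-aliasing-like jitter
layout_shift = [200, 200, 10, 10, 200, 200, 10, 10]  # light and dark regions swapped

print(global_ssim(baseline, minor_noise) > 0.99)   # True: small pixel jitter barely moves the score
print(global_ssim(baseline, layout_shift) < 0.5)   # True: a structural change drops it sharply
```

A visual regression check built on this idea would compare the score against a tolerance rather than demand pixel-exact equality, which is what lets it ignore rendering noise.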

Perhaps one of the most exciting aspects is how AI makes testing accessible to non-technical team members. With natural language processing, product managers or designers can write test scenarios in plain English, which AI then converts into executable tests. This shift turns QA into a collaborative effort rather than a bottleneck managed solely by engineers.

Drawbacks of AI Testing

Despite its advantages, AI-powered testing has its challenges.

One major issue is the "black box" nature of many AI models. Testers often can't see why certain test cases were chosen or failed, making it harder to reproduce bugs and sometimes causing friction between QA and development teams.

"Flaky AI tests can be worse than flaky manual ones, because they create false confidence and waste developer time. The future is hybrid."

Syed Fazle Rahman, Co-founder, Bug0

Technical issues can also arise. AI agents sometimes struggle with outdated element references or DOM changes during execution, occasionally interacting with the wrong elements. In long test runs, AI might lose context or generate irrelevant test cases, increasing costs without adding value. Even self-healing tests aren’t foolproof - they can drift over time, requiring manual corrections.

Another limitation is AI’s lack of contextual understanding. While it can confirm that a button works, it can’t judge whether the interaction feels intuitive or if error messages are clear enough. This becomes a problem for applications with complex business logic or workflows requiring deep domain expertise.

Finally, there are setup and security concerns. Configuring AI testing frameworks and training models requires significant upfront investment. Additionally, traditional AI testing tools may unintentionally expose sensitive data like session tokens or login credentials through LLM prompts. To avoid this, teams should adopt security-first practices, such as injecting credentials directly into browser instances rather than through AI models. While the setup can be complex, the long-term benefits often outweigh the initial challenges.
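One way to keep credentials out of LLM prompts is to let the model see only placeholder names and to substitute real values at the browser-driver layer. The sketch below illustrates that idea under stated assumptions: the `{{NAME}}` placeholder syntax, the function names, and the sample secret are all hypothetical, and the browser and model calls themselves are left out.

```python
import re

PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def build_prompt(step_description: str) -> str:
    """Text sent to the model: it may contain placeholder names, never secret values."""
    return f"Plan the browser action for this step: {step_description}"


def resolve_placeholders(action_value: str, secrets: dict[str, str]) -> str:
    """Swap placeholders for real values just before the browser driver types them."""
    return PLACEHOLDER.sub(lambda m: secrets[m.group(1)], action_value)


def contains_secret(text: str, secrets: dict[str, str]) -> bool:
    """Guard to run on every outgoing prompt before it leaves the test runner."""
    return any(value in text for value in secrets.values())


# Hypothetical secret, loaded from a vault or environment in a real setup.
secrets = {"LOGIN_PASSWORD": "s3cr3t-example"}

prompt = build_prompt("Type {{LOGIN_PASSWORD}} into the password field")
assert not contains_secret(prompt, secrets)  # the model never sees the value

typed_value = resolve_placeholders("{{LOGIN_PASSWORD}}", secrets)
print(typed_value == secrets["LOGIN_PASSWORD"])  # True: only the browser layer gets the real value
```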

Key Differences Between AI and Manual Testing

The distinction between AI and manual testing goes beyond speed - it's about how each approach fits into your workflow and how you allocate your team's efforts. Manual testing is constrained by human capacity, while AI can execute thousands of test cases simultaneously without breaking a sweat. For instance, a regression suite that might take 5–10 days to complete manually can be finished in under an hour with AI-powered tools.

But speed isn't the whole story. Manual testing is invaluable in situations requiring human judgment, like assessing the clarity of error messages or the usability of a new feature. On the other hand, AI excels in areas requiring machine precision, such as testing hundreds of username/password combinations. The real difference lies in the specific testing requirements and when they're needed.

Comparison Table

| Dimension | Manual Testing | AI-Powered Testing |
|---|---|---|
| Execution Speed | Slow; limited by human capacity (days for regression) | Extremely fast; parallel processing (minutes/hours) |
| Detection Accuracy | High for UX/UI; susceptible to fatigue in repetitive tasks | High for data/logic; avoids human fatigue |
| Test Coverage | Typically up to 70% | Can exceed 90% |
| Cost Profile | Low upfront; high long-term labor costs | High upfront (licenses/setup); strong long-term ROI |
| Scalability | Limited by team size and capacity | Highly scalable with cloud resources |
| Adaptability | Effective for creative logic; less so for routine UI updates | Strong for UI changes (self-healing) and complex data |
| Maintenance | Requires manual updates for every UI change | Reduces effort by 70–80% with self-healing scripts |
| Best Use Case | Exploratory, usability, and new features | Regression, load testing, and stable flows |

These differences don't just influence testing metrics - they reshape the way development teams work.

Impact on Workflow

The choice between manual and AI testing has a profound effect on development workflows. Each approach brings unique strengths to identifying flaws, but their integration into the development cycle varies significantly. Manual testing often becomes a bottleneck, especially toward the end of a project. QA teams wait for builds, execute tests, and log bugs in a reactive process that can delay releases by days or even weeks. AI flips this model by integrating seamlessly into CI/CD pipelines, delivering feedback almost instantly after code is committed. Developers can quickly identify and address issues in minutes rather than days.

This change also shifts how resources are allocated. Currently, about 43% of software developers spend part of their time on testing. AI tools reduce this burden, allowing developers to focus on building features instead of managing repetitive test processes. QA teams, in turn, can move away from routine test execution and concentrate on higher-level tasks like exploratory testing and addressing edge cases that require human insight.

"Automation isn't about replacing human testers. It's about letting those human testers focus on the interesting problems while computers handle the tedious stuff."

Deboshree Banerjee, Autify

The industry is already embracing this shift. By late 2024, 54% of U.S. tech companies were either using AI coding agents or planning to adopt them within six months. The global automation testing market reflects this momentum, projected to grow from $12.6 billion in 2019 to $55.2 billion by 2028. This evolution is transforming how software quality is managed and tested.

When to Use Each Approach

Deciding between AI and manual testing isn’t about which is better - it’s about choosing what fits your development cycle, the stability of your features, and the specific testing needs. Let’s break down when each method works best, based on their strengths and limitations.

Best Scenarios for Manual Testing

Manual testing is your go-to for new features or when specifications are still evolving. Human testers excel in applying judgment and empathy, which are crucial when refining user experiences or adapting to rapidly changing requirements. Unlike AI, they don’t rely on predefined scripts, making them flexible in dynamic environments.

This approach is also perfect for exploratory testing. Testers can mimic unpredictable user behavior and navigate non-linear paths that AI simply can’t replicate. Usability assessments - like gauging how intuitive an interface feels, evaluating error messages, or ensuring a feature aligns with your brand - rely heavily on human intuition. Manual testing also proves invaluable for accessibility audits and uncovering edge cases that demand subjective evaluation.

For small-scale or short-term projects with limited scope, manual testing offers a practical solution. It requires minimal upfront investment and delivers quick results for narrowly focused testing objectives.

"Manual testing provides irreplaceable value for exploratory discovery, usability assessment, and creative investigation." - Virtuoso QA

As your features stabilize and the project scales, that’s when AI-powered testing starts to take center stage.

Best Scenarios for AI-Powered Testing

AI-powered testing shines once your features are stable and you’ve moved into the maintenance phase. It’s especially effective for repetitive regression cycles on established workflows. For instance, AI can complete tasks that would take over 11 days manually in just under 1.5 hours, a reported 64x speed improvement. This makes it a key player in Continuous Integration/Continuous Deployment (CI/CD) pipelines, where quick feedback is essential during frequent builds.

If you’re managing large-scale projects with complex integrations, AI is a lifesaver. It handles thousands of test cases, cross-browser validations, and high-volume data scenarios effortlessly - areas where manual teams might falter. For example, Selenium users reportedly spend 80% of their time on test maintenance, but AI’s self-healing capabilities can cut that workload by 70% to 80%. In high-stakes industries like finance or healthcare, AI takes care of repetitive compliance and regression testing, freeing up human testers to focus on exploratory tasks.

AI also excels in ensuring technical accuracy at scale. Whether it’s validating hundreds of username/password combinations, stress-testing system performance, or maintaining consistency across a wide range of devices, AI delivers unmatched efficiency. The growing demand for such capabilities is reflected in the automated testing market, valued at $20 billion in 2022 and projected to grow at a 15% annual rate through 2032.

"The art of quality lies in blending human intuition, machine speed, and intelligent prediction." - QA.tech

Combining AI and Manual Testing

The real magic happens when you blend the strengths of AI with the expertise of manual testing. Together, they create a testing strategy that’s both efficient and insightful. AI takes care of repetitive, time-consuming tasks, while manual testers focus on areas where human qualities - like intuition, empathy, and creativity - make all the difference. This balanced approach not only boosts efficiency but also gives your testing process a distinct edge.

Human-in-the-Loop Testing

Human-in-the-Loop (HITL) testing is all about smart collaboration between humans and AI. Here’s how it works: AI handles repetitive tasks, such as running regression suites or smoke tests, while humans oversee the results to ensure they align with business goals, security needs, and brand standards.

The system relies on confidence thresholds. When AI encounters something uncertain - like an edge case or a low-confidence result - it flags the issue for human review. For instance, Tradesmen Agency applied this concept to their invoice processing system. Using tools like LlamaParse and large language models, the system extracts data but routes exceptions (like missing fields or low-confidence validations) to a manual review queue. Human reviewers correct these issues, and their input is used to train the AI, making it smarter and more accurate over time.
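The threshold mechanism can be sketched as a small routing function. This is a minimal illustration rather than any vendor's implementation: the `CheckResult` type, the field names, and the 0.85 threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    confidence: float  # 0.0 to 1.0, as reported by the AI system


def route(results: list[CheckResult], threshold: float = 0.85):
    """Split results into auto-accepted ones and ones queued for human review."""
    auto_accepted, review_queue = [], []
    for result in results:
        if result.confidence >= threshold:
            auto_accepted.append(result)
        else:
            review_queue.append(result)  # low confidence: a person decides
    return auto_accepted, review_queue


# Hypothetical results from one run.
results = [
    CheckResult("invoice_total_extracted", True, 0.97),
    CheckResult("vendor_name_extracted", True, 0.62),
    CheckResult("due_date_extracted", False, 0.91),
]
accepted, needs_review = route(results)
print([r.name for r in needs_review])  # ['vendor_name_extracted']
```

Note that the routing keys on confidence, not on pass or fail: a confident failure is reported as-is, while an uncertain pass still goes to a reviewer. Where to set the threshold is a trade-off between reviewer workload and the risk of accepting a wrong result.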

This cooperative framework ensures a smooth integration of AI and manual efforts, combining speed with precision.

How to Integrate Both Methods

To get the most out of this hybrid approach, start by automating stable, repetitive tasks like regression testing. This frees up manual testers to focus on exploratory and creative testing areas. Take advantage of AI’s self-healing capabilities to reduce the time spent on test maintenance.

Set up clear workflows for approval and escalation. For example, you might route high-stakes tasks - like failed logins or high-value transactions - from AI to human specialists.

"AI replaces tasks, not people, unless your job is literally 'Copy-Paste'." - Shilpa Prabhudesai, testRigor

Another game-changer? Natural Language Processing (NLP) tools. These allow non-technical team members to create automated tests using plain English. This way, everyone on your team can contribute to automation without needing to know how to code. And it’s not just a trend - by 2027, 80% of enterprises are expected to use AI-augmented testing tools, a massive jump from just 15% in 2023.

The real challenge isn’t deciding whether to combine AI and manual testing - it’s figuring out how quickly your team can adapt to this powerful combination.

Conclusion

Deciding between AI and manual testing comes down to leveraging the strengths of each where they shine. Manual testing is indispensable for uncovering subtle, nuanced issues through exploratory testing, validating usability, and addressing problems that require human intuition to detect. On the other hand, AI testing excels in delivering fast, consistent regression checks and handling data validation tasks that far exceed the limits of manual efforts.

Adopting a hybrid approach - where AI provides speed and scale and manual testing brings human judgment - can lead to significant efficiency gains. For instance, this strategy can reduce regression testing time by as much as 80% and cut maintenance overhead by 70–80%. Combining these strengths creates a well-rounded and effective testing process.

"The question isn't 'automated OR manual' - it's 'automated WHERE and manual WHERE'." - Ardura Consulting

This balance is especially important because even achieving 90% automation coverage doesn't ensure flawless user interactions. While AI can confirm that a button works, only human testers can assess whether the overall user experience feels natural or frustrating.

FAQs

How can AI and manual testing work together effectively in a testing strategy?

To build a solid testing strategy, it's smart to blend AI and manual testing, taking advantage of what each does best. AI shines when it comes to repetitive tasks like regression and load testing, offering speed and accuracy. Meanwhile, manual testing plays a critical role in areas like usability, edge cases, and user experience - places where human intuition makes all the difference.

Using AI tools alongside manual testers can streamline workflows and free up time for more complex, judgment-heavy scenarios. For example, AI can pinpoint potential problem areas or propose test cases, while manual testers focus on validating the overall user experience. This combination boosts test coverage, shortens development cycles, and elevates software quality by balancing automation's precision with human insight.

What are the key benefits of using AI-powered testing instead of manual testing?

AI-powered testing brings several advantages compared to manual testing. For starters, it delivers more consistent results by reducing the risk of human error and executing tests the same way every time. That consistency helps maintain quality across projects.

Another perk is its ability to generate test cases automatically, cutting down on repetitive tasks and freeing up time for developers and testers to focus on more strategic work. This automation not only speeds up the process but also reduces the burden of routine tasks.

What sets AI apart is its ability to learn from past data, which helps refine test accuracy and efficiency over time. This makes it particularly useful for managing intricate interaction flows, especially in dynamic settings like AI-driven tools or SaaS platforms. By simplifying the testing workflow, AI empowers teams to channel their energy into innovation and improving user experiences.

When is manual testing better than AI-powered testing?

Manual testing shines in situations where human insight, creativity, and intuition play a key role. This approach works best for tasks like exploratory testing, usability assessments, and ad-hoc testing - especially during the early development phases when designs and features are constantly evolving. Human testers excel at catching subtle design flaws, user experience hiccups, or interface frustrations because they can interpret context and user behavior in ways that AI cannot.

It's also the go-to method for complex, one-off scenarios or dynamic environments that demand human judgment. For instance, testing new features or verifying how interaction flows work often requires creative problem-solving and flexibility - qualities that are hard to replicate with automation. While automation is fantastic for repetitive or large-scale tasks, manual testing is irreplaceable when it comes to ensuring software meets user expectations and creates a smooth, enjoyable experience.